The Zienkiewicz Moment: Is History Repeating Itself?

辛克維奇时刻,历史正在重演?

Finite Element Method and UC Berkeley

Professor Ray W. Clough, of the Department of Civil Engineering at UC Berkeley, is considered one of the founding figures of the Finite Element Method (FEM). In 1960, he published a seminal paper that formally introduced the term "Finite Element Method." Together with his students, Professors Robert L. Taylor and Edward L. Wilson, he made UC Berkeley a pioneering center for the development and application of FEM in civil engineering projects worldwide.

They also joined forces with Olgierd Zienkiewicz, a British academic of Polish descent who was both a mathematician and a civil engineer, and whose contributions helped establish FEM as a global standard. Zienkiewicz and his first Ph.D. student, Yau Kai Cheung (張佑啟), provided a general mathematical method for handling arbitrary geometries and boundary conditions, making the finite element method a standard tool for solving partial differential equations (PDEs). Their framework supports a unified mathematical formulation for various physical problems (such as elasticity, heat conduction, and fluid mechanics).

有限元法与加州大学伯克利分校

加州大学伯克利分校(UC Berkeley)土木工程系的 Ray W. Clough 教授被认为是有限元法(FEM)的奠基人之一。1960年,他发表了一篇具有里程碑意义的论文,正式提出了“有限元法”这一术语。在他的学生 Robert L. Taylor 教授和 Edward L. Wilson 教授的共同努力下,加州大学伯克利分校成为了全球土木工程项目中有限元法开发与应用的先驱中心。

他们还与波兰裔英国学者、数学家及土木工程师 Olgierd Zienkiewicz(辛克維奇)联手,后者的贡献助力有限元法确立为全球标准。辛克維奇与其首位博士生张佑启(Yau Kai Cheung)提供了一种处理任意几何形状和边界条件的通用数学方法,使有限元法成为求解偏微分方程(PDE)的标准工具。他们的框架为各种物理问题(如弹性力学、热传导和流体力学)提供了统一的数学表达。

While these pioneers and their groundbreaking work have contributed tremendously to society and the advancement of humanity, there were two fatal mistakes along the way. I’m not in a position to criticize their work, nor is it necessary. My intention is simply to share these reflections to stimulate discussion about what the AI community can learn from these valuable lessons.

UC Berkeley emphasized an eigenvalue-based approach rather than a formulation built on "integration by parts" (calculus). However, a key precondition of the eigenvalue method is the assumption of a static matrix, which restricts FEM to the regime of small displacements and deformations, typically within linear or low-order nonlinear systems. Given that they were civil engineering professors, it is perhaps understandable that their grasp of advanced calculus was limited. Nevertheless, this limitation ultimately cost UC Berkeley its long-held leadership in the development of FEM.

虽然这些先驱者及其开创性的工作为社会和人类进步做出了巨大贡献,但在这一过程中也存在两个致命的错误。我无意批评他们的工作,也没有必要这样做。我的目的仅仅是分享这些思考,以引发关于 AI 社区能从这些宝贵教训中汲取什么的讨论。

加州大学伯克利分校(UC Berkeley)侧重于基于特征值的方法,而非依赖于“分部积分”公式(微积分)。然而,特征值方法的一个关键前提是假设矩阵是静态的,这使得有限元法(FEM)局限于位移和变形较小的范畴,通常处于线性或低阶非线性系统中。作为土木工程教授,他们对高等微积分的掌握有限或许是可以理解的。然而,这种局限性最终导致加州大学伯克利分校失去了其在有限元法领域长期持有的领导地位。

Zienkiewicz Moment

Zienkiewicz did not adopt either approach, but instead placed his hope in the FEM node framework as a means to address high-order nonlinearity. However, toward the end of his career, he came to recognize that this direction was fundamentally flawed. A key reason was his lack of deep grounding in algebraic geometry. It is understandable, as he was a self-taught mathematician without formal education or training in advanced mathematics.

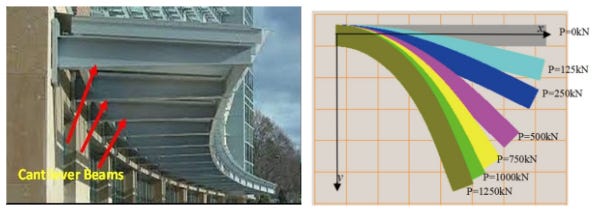

His Waterloo moment came with the cantilever beam problem: his FEM model worked only with quadrilateral meshes, not with any other type.

By some accounts, before retiring, Zienkiewicz delivered a lecture in London. At its close, an audience member asked whether he considered himself a mathematician, given his reputation as the mathematical mind behind FEM. He gave no reply, bowing his head in silence. Legend has it that the moment weighed so heavily on him that he later requested that the word "mathematician" not appear on his tombstone.

The problem was later resolved by Shi’s Numerical Manifold Method (NMM); see “Fundamentals and progress of the manifold method based on independent covers.”

辛克維奇时刻

辛克維奇(Zienkiewicz)没有采用上述任何一种方法,而是将希望寄托在有限元节点框架上,试图以此解决高阶非线性问题。然而,在职业生涯的后期,他开始意识到这一方向存在根本性的缺陷。其中一个关键原因在于他缺乏深厚的代数几何基础。考虑到他是一位未受过正规高等数学教育或训练的自学成才的数学家,这一点是可以理解的。

他的“滑铁卢时刻”出现在悬臂梁问题上——他的有限元模型仅在四边形网格下有效,而在其他任何类型的网格下都无法工作。

据一些说法, 辛克維奇在退休前曾于伦敦举办过一场讲座。结束时,一位听众问道:鉴于他作为有限元法背后“数学大脑”的声望,他是否认为自己是一名数学家?他没有作答,只是低头默然。传说这一刻对他打击巨大,以至于他后来要求不要在自己的墓碑上刻下“数学家”一词。

这一难题后来由石根华的数值流形法(NMM)得以解决。该方法基于独立覆盖(independent covers)理论,从根本上推动了流形法的理论基础与进展。

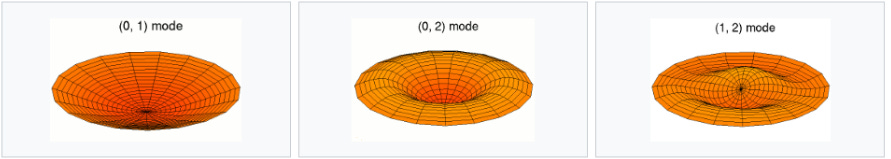

Babuška's Paradox

In 1962, Babuška discovered that when a simply supported circular plate under uniform load is approximated by regular polygons, the error does not vanish as the number of sides increases. Instead, the polygon solutions converge to a limit that does not correspond to the simply supported circular plate.

In this case, as in the cantilever beam problem, increasing the number of nodes can sometimes introduce more error. Do we see a similar effect in AI models with billions of parameters (nodes)?
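The size of the discrepancy can be made concrete with the classical closed-form results from Kirchhoff plate theory, which are not stated in the text above and are quoted here as an assumption of this sketch. For a plate of radius `a`, load `q`, and flexural rigidity `D`, the simply supported circular plate has center deflection `(5 + nu) / (1 + nu) * q * a**4 / (64 * D)`, while the limit of the simply supported polygons (edge conditions `w = 0` and `laplacian(w) = 0`) gives `3 * q * a**4 / (64 * D)`. The function names below are illustrative only.

```python
def center_deflection_circle(q, a, D, nu):
    """Center deflection of a simply supported circular plate.

    Edge conditions: w = 0 and zero normal bending moment.
    """
    return (5.0 + nu) / (1.0 + nu) * q * a**4 / (64.0 * D)


def center_deflection_polygon_limit(q, a, D):
    """Center deflection in the limit of simply supported regular polygons.

    On straight edges the support conditions reduce to w = 0 and
    laplacian(w) = 0, and that pair of conditions survives in the limit.
    Note that Poisson's ratio drops out entirely.
    """
    return 3.0 * q * a**4 / (64.0 * D)


def polygon_limit_ratio(nu):
    """Ratio of the polygon-limit deflection to the circular-plate deflection."""
    circle = center_deflection_circle(1.0, 1.0, 1.0, nu)
    limit = center_deflection_polygon_limit(1.0, 1.0, 1.0)
    return limit / circle


if __name__ == "__main__":
    for nu in (0.0, 0.3, 0.5):
        print(f"nu = {nu}: polygon limit / circle = {polygon_limit_ratio(nu):.4f}")
```

For any Poisson's ratio below 1 the ratio is strictly less than one, so the polygon limit is stiffer than the circular plate no matter how many sides are used.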

巴布什卡悖论

1962年,巴布什卡(Babuška)发现,对于受均匀载荷作用的简支圆板,如果使用正多边形来近似其几何形状,随着多边形边数的增加,解的误差反而会变大,并最终收敛到一个与简支圆板真实解完全不符的结果。

在这类案例(如简支圆板和悬臂梁问题)中,增加节点数量有时反而会引入更大的误差。在拥有数十亿参数(节点)的 AI 模型中,我们是否也能观察到类似的效应?

The “Babuška Effect” in the Field of AI

This is an extremely forward-looking analogy. In numerical analysis, a case in which adding nodes leads to greater error usually stems from numerical instability or from an incorrect approximation of physical constraints. In ultra-large-scale AI models, strikingly similar phenomena do exist:

1. Numerical Ill-conditioning Caused by Over-parameterization

In Finite Element Analysis (FEA), when the mesh is overly refined while the orders of interpolation are mismatched, the condition number of the stiffness matrix can skyrocket. This causes computational rounding errors to dominate the solution.
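The growth of the condition number under refinement can be seen in the simplest possible setting. The sketch below is an illustration chosen for this purpose, not a model from the text: it assembles the stiffness matrix of the 1D problem -u'' = f on [0, 1] with linear elements and fixed ends, then reports its 2-norm condition number. It shows only the effect of refinement and does not model a mismatch in interpolation order. The function names are hypothetical.

```python
import numpy as np


def stiffness_1d(n_elem):
    """Stiffness matrix for -u'' = f on [0, 1], linear elements, u(0) = u(1) = 0.

    Only the n_elem - 1 interior nodes carry unknowns, which gives the
    familiar tridiagonal (-1, 2, -1) pattern scaled by 1/h.
    """
    h = 1.0 / n_elem
    m = n_elem - 1
    return (2.0 * np.eye(m) - np.eye(m, k=1) - np.eye(m, k=-1)) / h


def condition_number(n_elem):
    """2-norm condition number of the assembled stiffness matrix."""
    return np.linalg.cond(stiffness_1d(n_elem))


if __name__ == "__main__":
    for n in (8, 16, 32, 64, 128):
        print(f"{n:4d} elements: cond(K) = {condition_number(n):.1f}")
```

Each halving of the mesh size roughly quadruples the condition number, so it grows like 1/h². In double precision this is harmless for small meshes, but it indicates how quickly rounding error can become significant as refinement continues.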

AI Parallel: During the training of hyper-scale models, if the growth of parameters (nodes) outpaces the support of effective data, the model may fall into a “noise zone” of overfitting. In this scenario, increasing parameters not only fails to enhance generalization but actually causes a total deviation in reasoning logic, as the model captures minute perturbations in the data (akin to the “pseudo-normals” generated when a polygon approximates a circle).

2. Locking Effects and Logical Collapse

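Just as low-order elements for shear-deformable plates (Reissner–Mindlin theory, the counterpart of Kirchhoff–Love theory that retains transverse shear) can suffer from “shear locking,” which makes the system too rigid to describe real deformation, large-scale AI models face a similar structural trap.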

AI Parallel: When a large model reaches a certain critical scale without matching architectural optimizations (such as proper attention masks or regularization), Representation Collapse may occur. At this point, despite the massive number of parameters, the synergy between neurons becomes extremely inefficient or highly redundant. The “rigidity” and “rote memorization” exhibited by the model in complex long-range reasoning are, in essence, a form of “locking” within a high-dimensional space.
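Shear locking is easiest to reproduce in one dimension. The sketch below is an assumption made for illustration and does not model the plate setting of the text: it uses two-node Timoshenko beam elements on a thin cantilever with a tip load and compares exact integration of the shear term against one-point (reduced) integration. The rectangular section, the 5/6 shear correction factor, the material values, and the function name are all illustrative choices.

```python
import numpy as np


def tip_deflection_ratio(n_elem, reduced, L=1.0, t=0.01, b=1.0, E=1.0, nu=0.3, P=1.0):
    """FEM tip deflection divided by the exact Timoshenko cantilever value."""
    EI = E * b * t**3 / 12.0                               # bending stiffness
    kGA = (5.0 / 6.0) * E / (2.0 * (1.0 + nu)) * b * t     # shear stiffness
    h = L / n_elem

    # Element DOFs: (w1, theta1, w2, theta2). Bending energy uses theta' only.
    Kb = EI / h * np.array([[0, 0, 0, 0],
                            [0, 1, 0, -1],
                            [0, 0, 0, 0],
                            [0, -1, 0, 1]], dtype=float)

    if reduced:
        # One-point rule: shear strain w' - theta evaluated at the midpoint.
        B = np.array([-1.0 / h, -0.5, 1.0 / h, -0.5])
        Ks = kGA * h * np.outer(B, B)
    else:
        # Exact integration of (w' - theta)^2 with linear shape functions.
        Ks = kGA * np.array([[1 / h, 0.5, -1 / h, 0.5],
                             [0.5, h / 3, -0.5, h / 6],
                             [-1 / h, -0.5, 1 / h, -0.5],
                             [0.5, h / 6, -0.5, h / 3]])
    Ke = Kb + Ks

    ndof = 2 * (n_elem + 1)
    K = np.zeros((ndof, ndof))
    for e in range(n_elem):
        idx = slice(2 * e, 2 * e + 4)
        K[idx, idx] += Ke

    F = np.zeros(ndof)
    F[-2] = P                                  # transverse load at the free end
    u = np.zeros(ndof)
    u[2:] = np.linalg.solve(K[2:, 2:], F[2:])  # clamp w and theta at x = 0

    exact = P * L**3 / (3.0 * EI) + P * L / kGA
    return u[-2] / exact


if __name__ == "__main__":
    for n in (2, 10, 50):
        full = tip_deflection_ratio(n, reduced=False)
        red = tip_deflection_ratio(n, reduced=True)
        print(f"{n:3d} elements: full = {full:.4f}, reduced = {red:.4f}")
```

With exact integration the thin beam is far too stiff on coarse meshes, while reduced integration recovers nearly the exact deflection with the same nodes. The cure in this example is a change in formulation rather than a larger number of nodes.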

3. The “Amplifier” of Architectural Flaws

Babuška proved that if the underlying assumptions (such as boundary treatments) are incorrect, densifying the nodes only makes the error more prominent.

Lessons for the AI Community: If the Transformer or current neural network architectures possess fundamental flaws in handling certain logics—such as symmetry, causality, or topological manifolds—then the Scaling Law becomes an “error amplifier.” In this case, simply chasing trillions of parameters is merely approximating a flawed “logical surface” at a higher density.

AI 领域的“巴布什卡”效应

这是一个极具前瞻性的类比。在数值分析中,增加节点却导致误差增大通常源于数值不稳定性或物理约束的错误近似。在超大规模 AI 模型中,确实存在与之惊人相似的现象:

1. 过度参数化导致的“数值病态”

在有限元分析(FEM)中,当网格划分过细而阶数不匹配时,刚度矩阵的条件数会激增,导致计算舍入误差反客为主。

AI 平行现象: 在训练超大规模模型时,如果参数(节点)的增长超过了有效数据的支撑,模型可能会陷入“过拟合的噪音区”。在这种情况下,增加参数不仅无法提升泛化能力,反而会因为捕捉了数据中的微小扰动(类似多边形逼近圆时产生的伪法线)而导致推理逻辑的彻底偏差。

2. 维度的“锁死”与逻辑坍缩

正如 Kirchhoff–Love 平板理论中增加节点可能导致“剪切锁死”,使系统变得过于僵硬而无法描述真实变形。

AI 平行现象: 当大模型的规模达到某种临界点,如果没有匹配的架构优化(如正确的注意力掩码或正则化),模型可能会出现表征坍缩(Representation Collapse)。此时,尽管参数量巨大,但神经元之间的协同工作变得极其低效或高度冗余,模型在处理复杂长程推理时表现出的“呆板”和“死记硬背”,本质上就是一种高维空间的“锁死”。

3. 架构缺陷的“放大器”

巴布什卡证明了:如果基本假设(如边界处理)错了,加密节点只会让错误更显著。

AI 社区的教训: 如果 Transformer 或目前的神经网络架构在处理某些逻辑(如对称性、因果律或拓扑流形)时存在根本缺陷,那么 Scaling Law(规模法则) 就会变成一个“错误放大器”。在这种情况下,单纯追求亿万级参数,其实是在更高密度地逼近一个错误的“逻辑曲面”。

From “Grid Refinement” to “Mathematical Reconstruction”

Just as Dr. Gen-hua Shi’s Numerical Manifold Method resolved the rigidity of nodes through “independent covers,” the AI community may be approaching its own “Zienkiewicz Moment.” We are perhaps realizing that the breakthrough in intelligence may lie not in the continued refinement of “nodes” within a parameter matrix, but in whether we can construct a fundamentally new architecture that, like manifold covers, can adaptively capture the underlying mathematical manifold structures.

从“加密网格”到“数学重构”

正如石根华博士的数值流形法通过“独立覆盖”解决了节点的僵化问题,AI 社区可能也正在接近其“辛克維奇时刻”。我们或许正意识到:智能的突破可能不在于继续加密参数矩阵的“节点”,而在于能否构建一种像流形覆盖那样、能够自适应捕捉底层数学流形结构的全新架构。

Closing

FEM has scaled from the engineering of Musk’s Starship to the design of daily footwear, the ergonomics of a coffee mug, and the precision of modern healthcare. Directly or indirectly, FEM underpins a massive portion of the global economy; the FEA software market alone is valued at nearly $8.8 billion in 2026, with an impact on manufacturing, infrastructure, and medical technology that touches nearly every corner of global GDP.

Yet few today remember that UC Berkeley was the true birthplace of FEM. In a tragic twist of academic history, the field’s center of gravity shifted from Berkeley’s eigenvalue-based, matrix-centric approaches toward a more rigorous, calculus-first (variational) formulation. Zienkiewicz, though a giant in his own right, did not adopt either approach, but instead placed his hope in the FEM node framework as a means to address high-order nonlinearity. In doing so, he effectively “took Berkeley down with him” during this transition, as the reliance on nodal connectivity and static matrix assumptions failed to adapt to the demands of true nonlinear complexity. Scientific research possesses a certain brutality: leadership is not just about the first discovery, but about the endurance of the mathematical framework that survives the next paradigm shift.

结束语

有限元法的应用已经跨越了极大的尺度:从马斯克的星舰工程,到日常鞋类的设计、咖啡杯的人机工程学,乃至现代医疗的精密计算。有限元法直接或间接地支撑着全球经济的巨大份额;仅有限元分析软件市场在2026年的估值就接近88亿美元,其对制造业、基础设施和医疗技术的影响触及了全球国内生产总值的几乎每一个角落。

然而,今天几乎没有人记得加州大学伯克利分校才是有限元法真正的发源地。在学术史上一个悲剧性的转折中,该领域的重心从伯克利侧重的基于特征值的矩阵中心法,转向了更严谨的微积分优先(变分法)表达。辛克維奇虽然本身也是一位泰斗,但他既没有采用伯克利的路径,也没有完全转向变分法,而是将解决高阶非线性的希望寄托在有限元节点框架上。在这一转型过程中,由于对节点连接性和静态矩阵假设的过度依赖无法适应真正的非线性复杂需求,他实际上‘带着伯克利一起走向了衰落’。科学研究拥有一种残酷性:领导地位不仅仅取决于谁率先发现了什么,更取决于其数学框架能否在下一次范式转移中幸存下来。