Single Token Geometry 01: Topology

单标几何:拓扑

Mathematics is very bureaucratic; without an overall perspective, one could turn forever in the same unyielding room. Geometry bestows the eye that beholds all things from above, a very ladder to freedom.

数学是非常官僚的;若没有一个整体性的视角,人就可能永远在同一个坚硬而不通融的房间里兜转。几何赋予人一种自上而下观看万物的眼睛,它几乎就是通往自由的阶梯。

In AI research, dimensionality, representation, feature, and hidden space are discussed constantly, yet mostly as conceptual descriptors rather than as concrete geometric objects with computable form.

在 AI 研究中,维度、表征、特征以及隐藏空间被不断讨论,但大多仍停留在概念性描述的层面,而不是作为具有可计算形式的具体几何对象来加以刻画。

Dimensionality is determined by the local coordinate structure, the manifold’s definition, and the convergence behavior of its underlying topology.

维度性由局部坐标结构、流形的定义,以及其底层拓扑的收敛行为所决定。

A Representation is how dimensionality is expressed. When all dimensions carry equal structure, they jointly form the representation; a feature is what emerges when certain dimensions consistently express more than others.

表征是维度性的表达方式;当所有维度都承载同等结构时,它们共同构成表征,而当某些维度持续地比其他维度表达出更多内容时,特征便从中涌现出来。

A Feature is what survives representation: the measurable, distinguishable structure that persists. But a feature is not free from dimensionality; it is dimensionality made selective. The intrinsic dimensionality of the manifold sets the ceiling on how many independent features can exist.

特征是那些在表征中得以存续的、可测量且可区分的结构。但特征并不独立于维度性而存在;它其实是被选择化了的维度性。流形的内在维度决定了能够存在多少相互独立特征的上限。

A Hidden Space is where representation goes beneath the observable, a latent structure that is not directly accessible, but from which observable features are generated.

隐藏空间则是表征沉入可观察性之下的地方,是一种无法被直接触及的潜在结构,但可观察到的特征正是由它生成出来的。

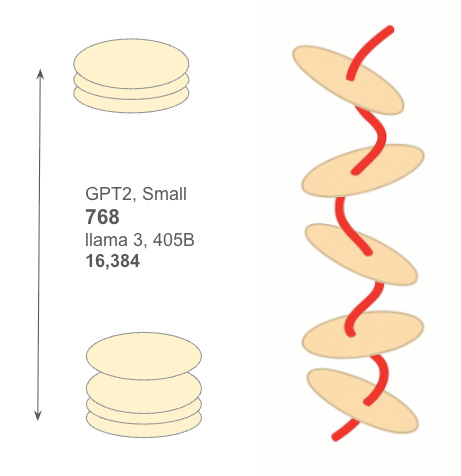

These are still good mathematical descriptors. But what is the atomic meaning of each term, and how does each map to the geometry of a single token? The largest known token dimension among open-source models is 16,384, in Llama 3.1 405B. Leading closed models are believed to be significantly higher, though their dimensions are undisclosed.

这些仍然是很好的数学描述符。每个术语最原子的含义到底是什么?它们又各自如何映射到单个单标的几何结构上?目前已知开源模型中最大的单标维度,是 Llama 3.1 405B 的 16,384。业界领先的闭源模型一般被认为还要高得多,只是尚未公开披露。

Through the lens of Deep Manifold, each element of a token vector is a topological cover. A single token is a stacked manifold: layered pieces, like pancakes. The full token is not a flat list of numbers, but a structure built from local covers, each carrying its own geometry. Here n denotes the ambient dimension, namely the number of coordinates in the hidden state vector. In GPT-2 Small, n=768. Since each cover admits infinite degrees of freedom, a single token carries open-ended dimensional possibility. But possibility is not final dimensionality. Once the token is actually computed, dimension is resolved through the relation of two covers, and the single token settles to n−1.

在深度流形的视角下,单标向量中的每一个元素都可以看作一个拓扑覆盖。单个单标是一个堆叠流形:一层一层的片状结构,像煎饼一样。完整的单标并不是一串扁平的数字列表,而是由局部覆盖构成的结构,每一个覆盖都携带着自身的几何性质。这里的 n 表示环境维度,也就是隐藏状态向量中的坐标数。在 GPT-2 Small 中,n=768。由于每一个覆盖都允许无限的自由度,单个单标因而具有开放性的维度可能性。但可能性并不是最终维度性。一旦单标被实际计算出来,维度就通过两个覆盖之间的关系被解析出来,而这个单个单标最终稳定在 n−1。
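One loose way to build intuition for a vector of n coordinates settling to n−1 is to impose a single relation among its coordinates. The sketch below is an analogy only, not the Deep Manifold construction: it assumes a mean-centering constraint (the helper `center` is hypothetical) and shows that once the coordinates must sum to zero, the last one is determined by the other n−1.

```python
import random


def center(vector):
    """Subtract the mean so that the coordinates sum to zero."""
    mean = sum(vector) / len(vector)
    return [x - mean for x in vector]


n = 768  # ambient dimension of GPT-2 Small, as stated in the text
rng = random.Random(0)

# A random vector stands in for a real hidden state (assumption).
token = [rng.gauss(0.0, 1.0) for _ in range(n)]
centered = center(token)

# Under the sum-to-zero relation, the last coordinate is fixed by the rest,
# so only n - 1 coordinates remain free.
recovered_last = -sum(centered[:-1])
free_coordinates = n - 1

print(len(centered), free_coordinates)
print(abs(recovered_last - centered[-1]) < 1e-9)
```

The vector still has n entries after centering; what changes is how many of them can vary independently.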

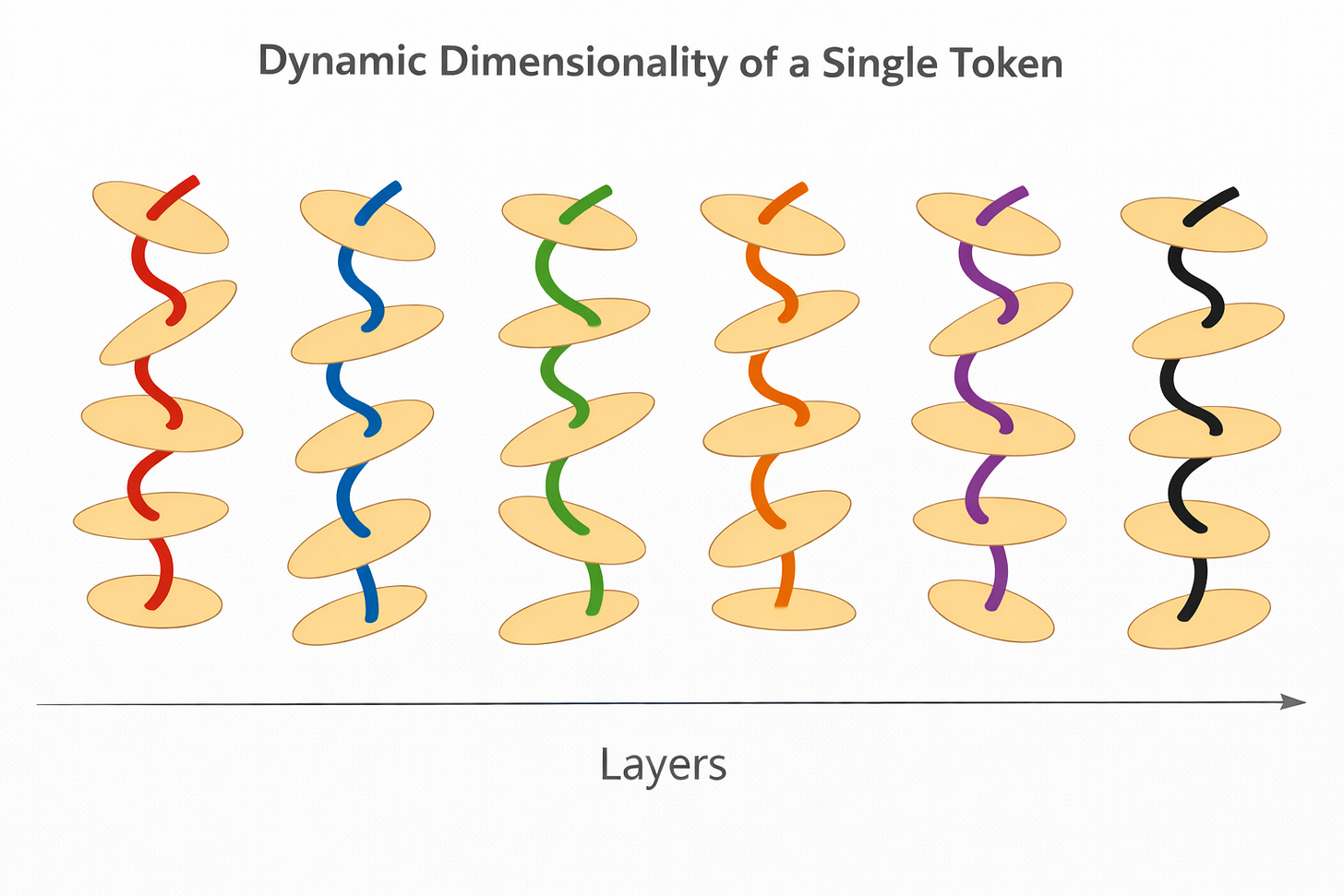

This settled dimensionality, however, is not static in the ordinary sense. A token is recomputed at every layer, and each recomputation occurs under a new local relation of covers. So while the single-token dimensionality resolves to n−1 at computation, that resolved form is dynamic across depth. The token persists, but it does not persist as a frozen geometric object. It persists through successive local resolutions.

然而,这种已经落定的维度性,并不是通常意义上的静态。单标会在每一层被重新计算,而每一次重新计算都发生在一种新的局部覆盖关系之下。因此,尽管单个单标的维度性在计算时会解析为 n−1,这种被解析出来的形式却会随着深度而动态变化。单标确实持续存在,但它并不是作为一个冻结不变的几何对象而持续存在的;它是在一次又一次连续的局部解析中持续存在的。

This is why the same token cannot be assigned one permanent dimensional identity throughout the network. At layer l, the token is resolved under one configuration of continuation; at layer l+1, it is resolved again under another. The token remains the same token in sequence, but not the same geometric realization. In this sense, dimensionality is not merely bounded; it is continuously re-instantiated as the token moves through the stacked manifold.

这就是为什么,同一个单标在整个网络中不能被赋予一个永久不变的维度身份。在第 l 层,这个单标是在一种延续构型下被解析出来的;而到了第 l+1 层,它又会在另一种构型下再次被解析。序列中的单标仍然是同一个单标,但其几何实现却已经不是同一个了。从这个意义上说,维度性不仅仅是有界的;当单标在堆叠流形中移动时,它还在被持续地重新实例化。
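The layer-by-layer recomputation described above can be made concrete with a toy stack. This is a minimal sketch under stated assumptions: the layers are hypothetical random residual maps (`make_layer`, `apply_layer`), not a trained transformer, and the sizes n = 16 and depth = 4 are arbitrary. It shows only that the ambient dimension stays fixed while the realized vector changes from layer l to layer l+1; it does not measure dimensionality in the Deep Manifold sense.

```python
import math
import random


def make_layer(n, rng):
    """Hypothetical layer: a random n x n weight matrix, scaled by 1/sqrt(n)."""
    scale = 1.0 / math.sqrt(n)
    return [[rng.gauss(0.0, scale) for _ in range(n)] for _ in range(n)]


def apply_layer(weights, h):
    """Residual update h + tanh(W h); the output keeps the same n coordinates."""
    return [h_i + math.tanh(sum(w * x for w, x in zip(row, h)))
            for h_i, row in zip(h, weights)]


def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


rng = random.Random(0)
n, depth = 16, 4
layers = [make_layer(n, rng) for _ in range(depth)]

h = [rng.gauss(0.0, 1.0) for _ in range(n)]
states = [h]  # states[l] is the token's hidden vector entering layer l
for weights in layers:
    h = apply_layer(weights, h)
    states.append(h)

# Same token, same ambient dimension, different vector at each depth.
for l in range(depth):
    print(l, len(states[l + 1]), round(cosine(states[l], states[l + 1]), 3))
```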

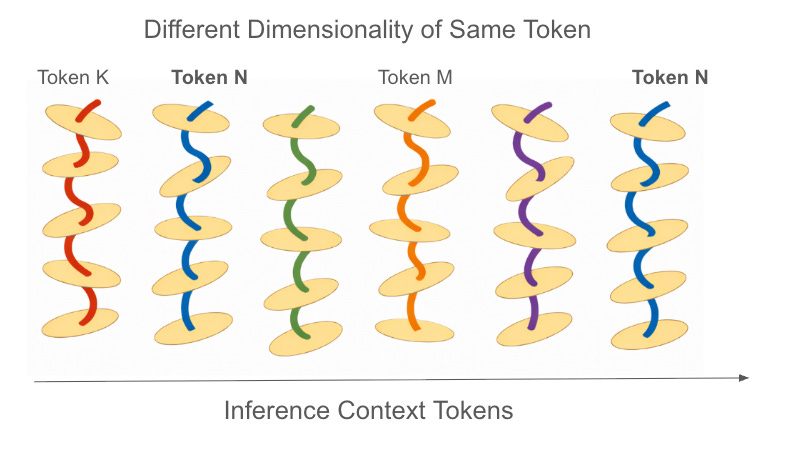

The same point holds even more strongly in context. At the final layer, a token at the same index position in the context window does not have one invariant realized dimension. Its final dimensional realization depends on the tokens before it, because those preceding tokens shape the continuation pathway by which it is computed. Thus, even when the ambient dimension remains fixed, the token’s realized geometry is context-dependent.

同样的道理,在上下文中体现得更为明显。在最后一层,处于上下文窗口中相同索引位置的同一个单标,并不具有一个不变的已实现维度。它最终的维度实现取决于它之前的那些单标,因为正是这些先前单标塑造了它被计算出来时所经历的延续路径。因此,即使环境维度保持不变,这个单标所实现出来的几何结构仍然是上下文依赖的。
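Context dependence at a fixed index can be sketched with a single toy causal attention step. The assumptions are loud here: `embed` is a hypothetical embedding that draws a fixed pseudo-random vector per token id, `attend_last` uses identity query, key, and value projections, and the token ids are arbitrary. The sketch shows only that the same token at the same position yields a different output vector under a different prefix, with the ambient dimension unchanged.

```python
import math
import random


def embed(token_id, n):
    """Hypothetical embedding: a fixed pseudo-random vector per token id."""
    rng = random.Random(token_id)
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


def attend_last(vectors):
    """Causal attention output for the last position, identity projections.

    The last vector is the query; it attends over itself and every earlier
    vector, then adds the mixture back as a residual.
    """
    query = vectors[-1]
    n = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(n)
              for key in vectors]
    peak = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) / total
             for i in range(n)]
    return [q + m for q, m in zip(query, mixed)]


n = 8
target = 42  # the token under study, at index 2 in both contexts
context_a = [3, 7, target]
context_b = [11, 5, target]

out_a = attend_last([embed(t, n) for t in context_a])
out_b = attend_last([embed(t, n) for t in context_b])

# Euclidean distance between the two realizations of the same token.
gap = math.sqrt(sum((a - b) ** 2 for a, b in zip(out_a, out_b)))
print(len(out_a), len(out_b))
print(gap)
```

Because attention is causal, only the preceding tokens can produce this gap; repeating the computation with the same prefix reproduces the same vector.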

Through the lens of Deep Manifold, we reexamine dimensionality, representation, feature, and hidden space.

在深度流形的视角下,我们重新审视维度性、表征、特征与隐藏空间。

Dimensionality

Dimensionality is not a fixed coordinate count, but the locally resolved geometric freedom of a token under constraint and continuation. It is shaped by the relation between neighboring covers, by the admissible structure of the stacked manifold, and by how the token settles through computation. Ambient width only sets the surrounding room. Actual dimensionality is dynamically realized layer by layer, and remains context-conditioned even at the final layer.

维度性

维度性并不是一个固定的坐标计数,而是单标在约束与延续之下被局部解析出来的几何自由度。它由相邻覆盖之间的关系、堆叠流形的可容许结构,以及单标在计算过程中如何收敛与落定所共同塑造。环境宽度所给出的,只是周围空间的房间大小。真正的维度性则是在一层一层的计算中动态实现的,并且即使到了最后一层,仍然受到上下文条件的制约。

Representation

A representation is the geometric class into which a token falls once its dimensionality is resolved through the relation of neighboring covers under constraint. At the final layer, tokens with similar realized dimensionality can be grouped into the same representation, not because their vectors are identical, but because they satisfy a similar boundary condition, such as loss alignment. In Deep Manifold, representation is thus not a fixed hidden vector, but a constrained resolution of dimensionality under continuation.

表征

表征是这样一种几何类别:当单标的维度性在约束之下通过相邻覆盖之间的关系被解析出来之后,这个单标便落入其中。在最后一层,具有相似已实现维度性的单标可以被归入同一个表征之中;这并不是因为它们的向量完全相同,而是因为它们满足相似的边界条件,例如损失对齐。在深度流形的视角下,表征因此并不是一个固定的隐藏向量,而是维度性在延续过程中的受约束解析。

From the perspective of single-token topology, Deep Manifold concludes that dimensionality is not a static property of a token. It is resolved dynamically across layers under constraint, including the boundary condition associated with loss. Moreover, the same token can realize different dimensionalities when it appears at different positions in the context window, since each position is constrained by different preceding tokens. Dimensionality is thus dynamic not only during training, but throughout inference itself.

从单标拓扑的视角来看,深度流形认为,维度性并不是单标的一个静态属性。它是在约束之下、跨越各层被动态解析出来的,其中也包括与损失相关的边界条件。进一步说,同一个单标在上下文窗口中的不同位置上,可以实现出不同的维度性,因为每一个位置都受到其前序单标的不同约束。因此,维度性不仅在训练过程中是动态的,而且在整个推理过程中也始终是动态的。

In this sense, feature and hidden space may still retain conceptual value. But given the highly dynamic nature of single-token dimensionality, their precise signatures remain unclear, at least until that dynamic structure is more fully understood.

从这个意义上说,特征与隐藏空间或许仍然保留着概念上的价值。但鉴于单标维度性具有如此高度动态的性质,它们的精确表征仍然并不清晰,至少在这种动态结构被更充分理解之前是如此。

Dimensionality is determined by three things: local coordinate structure, manifold definition, and the convergence behavior of the underlying topology. Single Token Topology addresses only the first, namely the local coordinate structure. The other two,

computation on stacked piecewise manifolds

convergence through fixed-point progression

have already been developed in Deep Manifold. This essay is the first part of the Single Token Geometry series. The next part will be Single Token Geometry: Manifold.

维度性由三件事情共同决定:局部坐标结构、流形定义,以及底层拓扑的收敛行为。《单标拓扑》 只回答了第一个问题,也就是局部坐标结构。其余两部分:

在堆叠分片流形上的计算

不动点推进实现的收敛

都已经在深度流形中有所展开。本文是 “单标几何” 系列的第一篇。下一篇将是 《单标几何:流形》。

Single Token Geometry Series 单标几何系列
